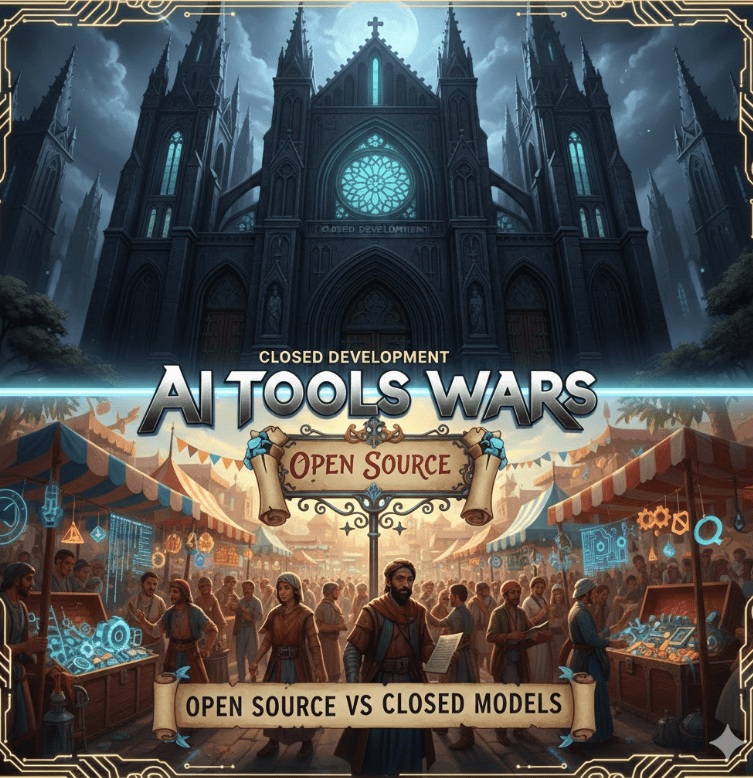

Every few weeks there’s a new take declaring that AI has made junior engineers obsolete, senior engineers redundant, and teams magically “10x.”

That story is lazy.

And dangerous.

AI didn’t remove the need for engineers. It exposed which parts of engineering were never that valuable to begin with.

What’s actually happening is a compression of execution. The typing, scaffolding, and boilerplate are cheaper than ever. Judgment, architecture, and responsibility are not. If anything, they’re more expensive, because the blast radius of a bad decision is larger.

This forces a reset. On roles. On metrics. On how we train people. On what “good” looks like.

Let’s talk about what to do.

For Engineering Leaders (CTOs, VPs, EMs)

Redesign junior roles instead of killing them

If your juniors were hired to crank out CRUD and Stack Overflow glue, yes—AI just ate their lunch.

That’s your fault, not theirs.

Stop hiring “Keyboard Cowboys.” Hire juniors who can:

- Drive AI tools deliberately

- Reason about outputs

- Write tests that catch subtle failures

- Explain tradeoffs in plain language

Make AI usage explicit in job descriptions and interviews. Ask candidates how they validate AI output, not how they prompt it. The junior of the future is an operator and a critic, not a typist.

Make fundamentals non-negotiable

AI is great at producing answers.

It’s bad at knowing when they’re wrong.

Your review culture must check understanding, not just correctness. Ask:

- Why was this approach chosen?

- What fails under load?

- What breaks when assumptions change?

Reward engineers who can debug, profile, and reason under failure.

That’s where AI still stumbles—and where real engineers earn their keep.

Treat AI as infrastructure, not a toy

If AI tools are everywhere but governed nowhere, you already have a problem.

Standardize:

- Which tools are allowed

- How prompts are shared and versioned

- How outputs are validated

- How IP, data, and security are handled

Ignoring this creates shadow-AI, silent leaks, and unverifiable decisions. You wouldn’t let people deploy random databases to prod.

Don’t do that with AI.

Shift metrics away from “lines shipped”

Raw output metrics are now close to meaningless. AI inflates them by design.

Measure what actually matters (DORA-style):

- System quality, developer experience (DevEx), even developer happiness

- Incident recovery time

- Change failure rate

- Test coverage and signal

- Architectural clarity

AI can help you ship faster. It cannot guarantee outcomes. Your metrics should reflect that reality.
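To make two of those metrics concrete, here is a minimal sketch of computing change failure rate and mean time to restore from deployment records. The `Deployment` record and its field names are hypothetical, and measuring recovery from the deploy timestamp is a simplifying assumption; adapt both to whatever your deploy and incident tooling actually logs.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class Deployment:
    # Hypothetical record shape, not taken from any specific tool.
    deployed_at: datetime
    caused_incident: bool
    restored_at: Optional[datetime] = None  # set once the incident is resolved


def change_failure_rate(deployments: list[Deployment]) -> float:
    """Fraction of deployments that led to an incident."""
    if not deployments:
        return 0.0
    failures = sum(1 for d in deployments if d.caused_incident)
    return failures / len(deployments)


def mean_time_to_restore(deployments: list[Deployment]) -> Optional[timedelta]:
    """Average time from a failing deployment to restoration.

    Returns None when there are no resolved incidents to average over.
    """
    durations = [
        d.restored_at - d.deployed_at
        for d in deployments
        if d.caused_incident and d.restored_at is not None
    ]
    if not durations:
        return None
    return sum(durations, timedelta()) / len(durations)
```

Neither number cares how many lines were written or who (or what) wrote them, which is the point.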

Invest in orchestration skills

The future senior engineer doesn’t just write code. They design systems that coordinate intelligence.

Encourage work on:

- Agent pipelines

- Evaluators and guardrails

- Feedback loops

- Tooling that checks AI against reality

This is the new leverage layer. Treat it as a core skill, not a side experiment.
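As a rough illustration of what an evaluator or guardrail can look like, the sketch below runs a list of checks over a model's raw output and collects the failures. The check names and the secret pattern are assumptions made up for this example; a real setup would likely use dedicated scanners and schema validation.

```python
from __future__ import annotations

import json
import re
from typing import Callable, Optional

# A check takes the raw model output and returns an error message,
# or None if the output passes.
Check = Callable[[str], Optional[str]]

# Illustrative pattern only; real secret scanning needs a dedicated tool.
SECRET_PATTERN = re.compile(r"(api[_-]?key|password)\s*[:=]\s*\S+", re.IGNORECASE)


def no_secrets(output: str) -> Optional[str]:
    """Flag output that looks like it contains a credential assignment."""
    return "possible secret in output" if SECRET_PATTERN.search(output) else None


def is_json_object(output: str) -> Optional[str]:
    """Require the output to parse as a JSON object."""
    try:
        parsed = json.loads(output)
    except json.JSONDecodeError:
        return "output is not valid JSON"
    return None if isinstance(parsed, dict) else "output is not a JSON object"


def run_guardrails(output: str, checks: list[Check]) -> list[str]:
    """Run every check and collect failures; an empty list means accepted."""
    failures = []
    for check in checks:
        error = check(output)
        if error is not None:
            failures.append(error)
    return failures
```

Collecting every failure instead of stopping at the first one gives the feedback loop more to work with when the output is sent back for another attempt.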

Protect deep expertise

Don’t flatten everyone into “full-stack generalists.”

You still need domain owners:

- Performance

- Security

- Data

- Infrastructure

AI boosts breadth.

Humans anchor depth.

Lose that balance and your systems will rot quietly—until they fail loudly.

Rebuild onboarding

Assume new hires will use AI heavily from day one.

Onboarding should teach:

- How your systems actually work

- Why key decisions were made

- What invariants must not be broken

- How to validate AI output against production reality

Otherwise you’re training people to copy confidently—and understand nothing.

For Engineering Teams

Use AI to kill boilerplate, not thinking

Let AI scaffold, refactor, and generate tests.

Humans own:

- Architecture

- Invariants

- Edge cases

- Failure modes

If AI is making your design decisions, your team is already in trouble.

Practice “AI-assisted debugging,” not blind trust

Always reproduce. Always measure. Always verify.

Treat AI like a fast junior engineer: helpful, confident, and occasionally very wrong. If you wouldn’t merge their code without checks, don’t do it for a model.
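One cheap way to "verify" is to compare a suggested implementation against a slow, obviously correct reference on random inputs. The sketch below assumes a hypothetical task (order-preserving de-duplication) and a hypothetical model-suggested `candidate_dedupe`; the pattern matters more than the specific functions.

```python
from __future__ import annotations

import random
from typing import Callable, Optional

DedupeFn = Callable[[list[int]], list[int]]


def reference_dedupe(items: list[int]) -> list[int]:
    """Slow but obviously correct: keep the first occurrence of each item, in order."""
    result: list[int] = []
    for item in items:
        if item not in result:
            result.append(item)
    return result


def candidate_dedupe(items: list[int]) -> list[int]:
    """Stand-in for a model-suggested version; dicts preserve insertion order."""
    return list(dict.fromkeys(items))


def find_counterexample(
    candidate: DedupeFn,
    reference: DedupeFn,
    trials: int = 500,
    seed: int = 0,
) -> Optional[list[int]]:
    """Return the first random input where the two functions disagree, else None."""
    rng = random.Random(seed)
    for _ in range(trials):
        items = [rng.randint(0, 9) for _ in range(rng.randint(0, 20))]
        if candidate(items) != reference(items):
            return items
    return None
```

A `None` result is evidence, not proof. A returned list is a reproducible failing case you can paste straight into a regression test.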

Document intent, not just code

Code shows what the system does. It rarely shows why.

Write down:

- Why the system exists

- What tradeoffs were made

- What must never change

This documentation becomes the source of truth when AI generates plausible nonsense at scale.

Continuously reskill horizontally

Each engineer should expand into at least one adjacent area every year:

- Infra

- Data

- Product

- Security

AI lowers the learning barrier. Use that advantage deliberately, or waste it.

For Individual Engineers

Master one thing deeply

Pick a core domain and become genuinely hard to replace there.

Depth is your moat. AI makes general knowledge cheap. It does not replace hard-earned intuition.

Learn how AI systems fail

Hallucinations. Bias. Brittle reasoning. Silent errors.

Knowing failure modes is more valuable than knowing prompts. Engineers who understand where AI breaks will outlast those who just know how to ask nicely.

Build visible, real projects

Portfolios beat resumes.

Show:

- Systems you designed

- Tradeoffs you made

- How you used AI responsibly

- How you validated results

Real work cuts through hype instantly.

Think in systems, not tickets

The future engineer isn’t judged by tasks completed.

They’re judged by how well the whole machine runs under stress.

Bottom Line

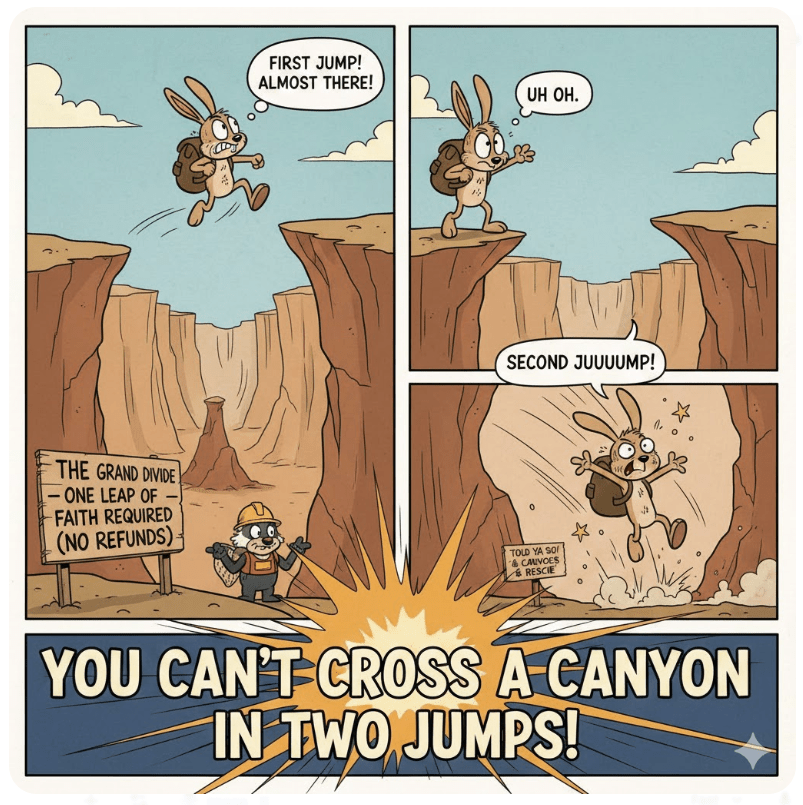

AI compresses execution time.

It does not compress judgment, responsibility, or accountability.

Teams that double down on thinking, architecture, and learning will compound.

Teams that chase raw output will ship faster…

…straight into walls.

The choice is not whether to use AI.

The choice is whether you’re building engineers—or just accelerating mistakes.
