It started as a simple idea my son brought up: Can we make a web app that counts our pull-ups during our pull-up games?

Turns out, teaching a machine to recognize human suffering is both hilarious and complicated.

What began as a “let’s make a quick pull-ups app” spiraled into an intense journey through computer vision, browser quirks, and a few accidental infinite loops that made our laptop sound like a jet engine.

The “Simple” Goal

I wanted to automatically count pull-ups using a web camera.

Easy, right?

Just detect a human, see when they go up and down, and count.

Except that every word in that sentence hides a trap.

“Detect a human” is not trivial. “See when they go up” depends on camera angles, lighting, body type, and your definition of “up.”

And “count” — well, let’s just say you need to make sure it doesn’t count every micro-twitch as a rep or you’ll end up feeling like a superhero with 243 pull-ups in 30 seconds.

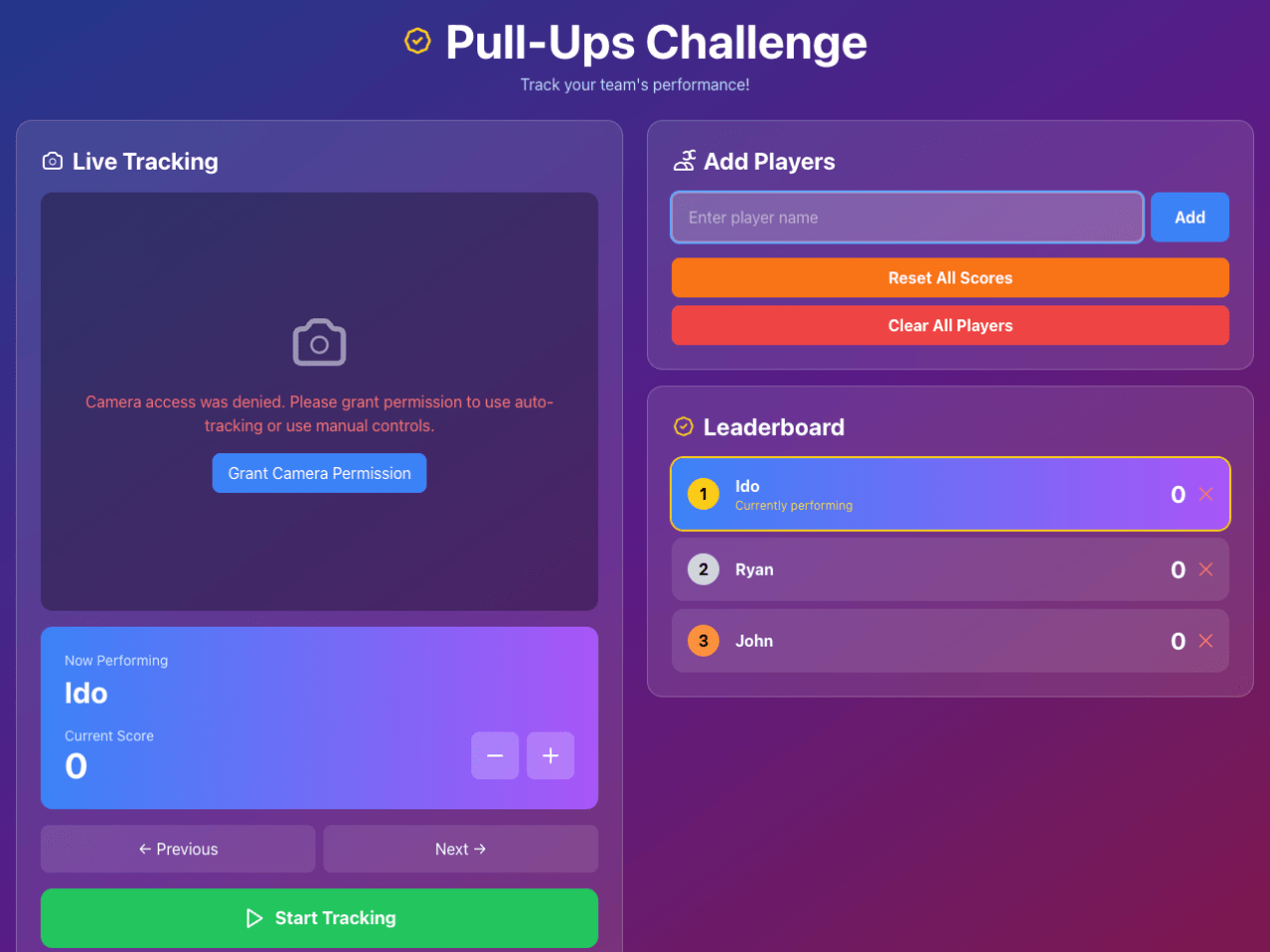

I used this testing app to detect the pull-ups:

So the app had to:

- Access cameras (front and back, because apparently everyone has opinions about their “better side”).

- Detect poses in real-time.

- Tell the difference between “hanging like a sloth” and “actually doing work.”

- Support multiple players.

- Run on devices ranging from shiny new iPhones to ancient Androids with cracked screens and questionable camera sensors.

The Tech Stack: KISS (Keep It Simple, Stupid)

I went with the minimalistic “one HTML file to rule them all” approach.

No build step.

No bundler.

No 700MB of node_modules.

Just the good stuff:

- React 18 via CDN for light state management

- Tailwind CSS for “I don’t want to write CSS” speed

- MediaPipe Pose for pose detection magic

- Canvas API for overlays and drawings

- getUserMedia API for camera access

The Beating Heart: Pose Detection

The soul of this project lives in the detectPullUp() function.

MediaPipe spits out a bunch of landmarks — nose, shoulders, elbows, wrists — and I do some math to figure out if the head is above the wrists and if the arms are extended.

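```javascript
// Simplified detection logic (wrapped in a hypothetical helper so it runs on its own;
// the real version lives inside detectPullUp()).
// MediaPipe landmarks are normalized by frame width/height and y grows downward,
// so a smaller y means higher up in the frame.
function classifyFrame(headY, wristY, avgArmAngle) {
  // The head must clear the wrists by 5% of the frame height.
  const headAboveWrists = headY < wristY - 0.05;
  // Assumes avgArmAngle is the average elbow bend in degrees (0 = straight arms).
  const armsExtended = avgArmAngle < 35;

  const isUp = headAboveWrists;
  const isDown = armsExtended;
  return { isUp, isDown };
}
```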
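The snippet above takes avgArmAngle as given. One way to compute it is sketched below, under the assumption that it is an elbow bend angle (180° minus the shoulder-elbow-wrist angle), so a straight arm reads near 0 and `< 35` means "nearly straight". It uses MediaPipe Pose's landmark indices 11/12 (shoulders), 13/14 (elbows), and 15/16 (wrists); the helper names are hypothetical.

```javascript
// Sketch (assumption): avgArmAngle as an elbow *bend* angle, i.e. 180 degrees
// minus the shoulder-elbow-wrist angle, so 0 means a perfectly straight arm.
// Indices follow MediaPipe Pose's 33-landmark layout; helper names are hypothetical.
const LEFT = { shoulder: 11, elbow: 13, wrist: 15 };
const RIGHT = { shoulder: 12, elbow: 14, wrist: 16 };

// Angle ABC at vertex b, in degrees.
function angleAtDeg(a, b, c) {
  const v1x = a.x - b.x, v1y = a.y - b.y;
  const v2x = c.x - b.x, v2y = c.y - b.y;
  const mag = Math.hypot(v1x, v1y) * Math.hypot(v2x, v2y);
  if (mag === 0) return NaN; // two landmarks coincide: angle undefined
  const cos = Math.min(1, Math.max(-1, (v1x * v2x + v1y * v2y) / mag)); // clamp float noise
  return (Math.acos(cos) * 180) / Math.PI;
}

// Normalized landmarks are scaled to pixels first so a non-square frame
// does not distort the angle.
function armBendDeg(landmarks, side, width, height) {
  const px = (i) => ({ x: landmarks[i].x * width, y: landmarks[i].y * height });
  return 180 - angleAtDeg(px(side.shoulder), px(side.elbow), px(side.wrist));
}

// Average of both arms, in degrees of bend.
function averageArmBend(landmarks, width, height) {
  const left = armBendDeg(landmarks, LEFT, width, height);
  const right = armBendDeg(landmarks, RIGHT, width, height);
  return (left + right) / 2;
}
```

Scaling by width and height matters more than it looks: on a 16:9 frame, computing angles directly on normalized coordinates squashes the horizontal axis and makes bent arms look straighter or more bent than they are.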

Then I wrapped it all in debouncing logic to prevent the app from counting every twitch, hiccup, or shadow as a pull-up.

Because when you’re tired, even your nose wobbles.
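Here is a minimal sketch of what that debouncing can look like, assuming the per-frame isUp/isDown flags from the detection snippet. The RepCounter class and its confirmFrames parameter are hypothetical, not the app's actual code: a new phase only sticks once it has held for a few consecutive frames, and a rep is counted on a confirmed down-to-up transition.

```javascript
// Hypothetical debounced rep counter. A new phase ("up" or "down") is only
// accepted after it has been seen for `confirmFrames` consecutive frames, and a
// rep is counted on a confirmed down -> up transition.
class RepCounter {
  constructor(confirmFrames = 3) {
    this.confirmFrames = confirmFrames;
    this.phase = "unknown"; // last confirmed phase
    this.candidate = null; // phase waiting to be confirmed
    this.streak = 0; // consecutive frames the candidate has been seen
    this.reps = 0;
  }

  // Feed one frame's { isUp, isDown } flags; returns the running rep count.
  update({ isUp, isDown }) {
    let observed = null;
    if (isUp && !isDown) observed = "up";
    else if (isDown && !isUp) observed = "down";

    // In-between frames, or frames that agree with the confirmed phase,
    // cancel any pending transition.
    if (observed === null || observed === this.phase) {
      this.candidate = null;
      this.streak = 0;
      return this.reps;
    }

    this.streak = observed === this.candidate ? this.streak + 1 : 1;
    this.candidate = observed;

    if (this.streak >= this.confirmFrames) {
      // Starting at the top of the bar does not count; only down -> up does.
      if (this.phase === "down" && observed === "up") this.reps += 1;
      this.phase = observed;
      this.candidate = null;
      this.streak = 0;
    }
    return this.reps;
  }
}
```

Raising confirmFrames trades responsiveness for stability; at an assumed 15 processed frames per second, 3 frames is about 200 ms, which is short compared to a real rep but long compared to a wobbling nose.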

Reality Hits Harder Than a Missed Rep

In theory, this all sounded elegant.

In practice, it was chaos.

Camera Permissions: Browsers guard the camera like it’s a nuclear launch button. A secure context is mandatory (HTTPS, or localhost while developing), permissions are moody, and the UI for asking for access changes every six months.
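For the permission side, a small sketch: check for a secure context before calling getUserMedia, and translate its rejection into something a sweaty human can read. The error names below are standard DOMException names that getUserMedia can reject with; the helper names and message wording are assumptions.

```javascript
// Map getUserMedia rejections to readable messages (wording is hypothetical).
function describeCameraError(err) {
  switch (err && err.name) {
    case "NotAllowedError":
      return "Camera permission was denied. Allow it in the browser's site settings.";
    case "NotFoundError":
      return "No camera was found on this device.";
    case "NotReadableError":
      return "The camera is busy (another app or tab may be using it).";
    case "OverconstrainedError":
      return "No camera matches the requested settings.";
    case "SecurityError":
      return "Camera access is blocked on this page.";
    default:
      return "Could not start the camera.";
  }
}

// Browser-only: request a stream, failing early on insecure origins.
async function openCamera(constraints) {
  // navigator.mediaDevices is only exposed in secure contexts (HTTPS or localhost).
  if (!globalThis.isSecureContext || !globalThis.navigator?.mediaDevices) {
    throw new Error("Camera access needs HTTPS (or localhost).");
  }
  try {
    return await navigator.mediaDevices.getUserMedia(constraints);
  } catch (err) {
    throw new Error(describeCameraError(err));
  }
}
```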

Multi-Camera Support: Some devices let you switch cameras easily.

Others pretend the second camera doesn’t exist. Anyway, we tested it on iPhone and it’s fine. That’s good enough for our ‘MVP’.
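Switching cameras usually comes down to the facingMode constraint: "user" for the front camera, "environment" for the back. A sketch with hypothetical helper names and an assumed 640×480 request: asking for facingMode as `ideal` rather than `exact` lets a single-camera device fall back to whatever it has instead of rejecting with OverconstrainedError.

```javascript
// Build getUserMedia constraints for a given facing mode (helper name is hypothetical).
function buildVideoConstraints(facing) {
  return {
    audio: false,
    // `ideal` is best-effort, so devices without that camera still get a stream.
    video: { facingMode: { ideal: facing }, width: { ideal: 640 }, height: { ideal: 480 } },
  };
}

// Toggle between front and back cameras.
function nextFacing(facing) {
  return facing === "user" ? "environment" : "user";
}

// Browser-only: stop the old stream first, since some phones refuse to open
// a second camera while the first one is still running.
async function switchCamera(videoEl, oldStream, facing) {
  if (oldStream) oldStream.getTracks().forEach((track) => track.stop());
  const stream = await navigator.mediaDevices.getUserMedia(buildVideoConstraints(facing));
  videoEl.srcObject = stream;
  await videoEl.play();
  return stream;
}
```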

Performance: Real-time pose detection on 30+ FPS video isn’t for the faint of CPU.

I had to throttle frames, skip calculations, and give the browser breathing room before it passed out.
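A minimal sketch of that throttling, assuming a requestAnimationFrame loop and an async `detect` placeholder standing in for the MediaPipe call. FrameThrottle, startLoop, and the 15 FPS default are hypothetical: a frame is skipped when the last detection ran too recently, and also when a detection is still in flight.

```javascript
// Hypothetical throttle: caps how often pose detection runs, independent of the
// camera's frame rate. The 15 FPS default is an assumption, not the app's value.
class FrameThrottle {
  constructor(targetFps = 15) {
    this.minIntervalMs = 1000 / targetFps;
    this.lastRun = -Infinity;
    this.busy = false; // true while a detection call is still in flight
  }

  // Should the frame at time `nowMs` be sent to the model?
  shouldProcess(nowMs) {
    if (this.busy || nowMs - this.lastRun < this.minIntervalMs) return false;
    this.lastRun = nowMs;
    return true;
  }
}

// Browser-only loop. `detect` is a placeholder for the async MediaPipe call.
function startLoop(throttle, detect) {
  const tick = (now) => {
    requestAnimationFrame(tick); // keep the loop alive even on skipped frames
    if (!throttle.shouldProcess(now)) return;
    throttle.busy = true;
    Promise.resolve()
      .then(detect)
      .catch((err) => console.error(err))
      .finally(() => {
        throttle.busy = false;
      });
  };
  requestAnimationFrame(tick);
}
```

The `busy` flag is the part that gives slower devices breathing room: if one detection takes longer than the frame budget, the following frames are simply dropped instead of piling up.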

Lighting: The model’s accuracy drops faster than your willpower during the 10th rep if the lighting isn’t good. Pro tip: daylight beats “romantic gym mood lighting.”

Some Cool Engineering Bits

Real-Time Loop: The app continuously grabs frames, detects poses, updates the UI, and draws funky skeletons on a canvas — all while pretending it’s easy. Luckily for us, the modern CPUs on mobile phones are strong. Really strong.
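Drawing the skeleton is mostly coordinate scaling: landmarks arrive normalized, so each one is multiplied by the canvas size before drawing. A sketch, assuming MediaPipe Pose's 33-landmark indices and its per-landmark visibility score; the upper-body connection list, colors, and 0.5 visibility cutoff are choices made for this example, not taken from the app.

```javascript
// Hand-picked upper-body bones, as pairs of MediaPipe Pose landmark indices
// (11/12 shoulders, 13/14 elbows, 15/16 wrists, 23/24 hips).
const UPPER_BODY = [
  [11, 12], [11, 13], [13, 15], [12, 14], [14, 16], [11, 23], [12, 24], [23, 24],
];

// Convert landmarks into pixel line segments [x1, y1, x2, y2].
function skeletonSegments(landmarks, width, height, minVisibility = 0.5) {
  const segs = [];
  for (const [a, b] of UPPER_BODY) {
    const p = landmarks[a], q = landmarks[b];
    if (!p || !q) continue;
    // Skip bones whose endpoints the model is unsure about (missing score = trusted).
    if ((p.visibility ?? 1) < minVisibility || (q.visibility ?? 1) < minVisibility) continue;
    segs.push([p.x * width, p.y * height, q.x * width, q.y * height]);
  }
  return segs;
}

// Browser-only: redraw the overlay for one frame.
function drawSkeleton(ctx, landmarks) {
  const { width, height } = ctx.canvas;
  ctx.clearRect(0, 0, width, height);
  ctx.lineWidth = 4;
  ctx.strokeStyle = "lime";
  for (const [x1, y1, x2, y2] of skeletonSegments(landmarks, width, height)) {
    ctx.beginPath();
    ctx.moveTo(x1, y1);
    ctx.lineTo(x2, y2);
    ctx.stroke();
  }
}
```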

Smart State Management: React state for the UI, refs for the fast stuff.

Keeps everything in sync without melting your render loop.
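A sketch of that split, assuming React 18 is loaded from the CDN as a global `React` (no JSX needed). usePullUpTracker and onFrame are hypothetical names: the detection loop checks every frame against a ref, which never triggers a render, and setState is called only when the rep count or phase actually changes.

```javascript
// Hypothetical hook; assumes React 18 is loaded from a CDN as the global `React`.
function usePullUpTracker() {
  // Hot path: checked on every processed frame. Reading or writing a ref never re-renders.
  const frameRef = React.useRef({ reps: 0, phase: "unknown" });
  // UI path: only what the screen shows.
  const [display, setDisplay] = React.useState({ reps: 0, phase: "unknown" });

  // Called from the detection loop; stable identity thanks to useCallback.
  const onFrame = React.useCallback((reps, phase) => {
    const last = frameRef.current;
    if (last.reps === reps && last.phase === phase) return; // nothing visible changed
    frameRef.current = { reps, phase };
    setDisplay({ reps, phase }); // one render per real change, not per frame
  }, []);

  return { display, onFrame };
}
```

The ref also sidesteps stale closures: because onFrame is created once (empty dependency list), it could not see fresh `display` state, but it always sees the current ref.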

Visual Feedback: You get live overlays showing the pose, angles, and the app’s current guess at whether you’re “up,” “down,” or “weirdly in between.”

That was very helpful while we debugged the app and tried to answer ‘why is it counting your pull-ups but not mine?’

Testing the Madness

We made a testing rig that runs pose detection on pre-recorded videos. That let me adjust thresholds and prove that the algorithm doesn’t count head scratches as pull-ups.
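A sketch of the threshold-tuning half of such a rig, assuming each recorded clip has already been reduced to a per-frame `lift` value (wristY minus headY, so the detection snippet's `headY < wristY - 0.05` is `lift > 0.05`) plus a hand-counted rep total. The two-threshold counter inside is a simplified stand-in, not the app's detector, and every name here is hypothetical.

```javascript
// Stand-in counter: hysteresis with separate up/down thresholds on `lift`.
function countReps(lift, upAt, downAt) {
  let up = false;
  let reps = 0;
  for (const v of lift) {
    if (!up && v > upAt) {
      up = true;
      reps += 1;
    } else if (up && v < downAt) {
      up = false;
    }
  }
  return reps;
}

// Return every threshold pair that reproduces the hand count on all clips.
function sweep(clips, upCandidates, downCandidates) {
  const passing = [];
  for (const upAt of upCandidates) {
    for (const downAt of downCandidates) {
      if (downAt >= upAt) continue; // hysteresis needs a gap between thresholds
      const ok = clips.every((clip) => countReps(clip.lift, upAt, downAt) === clip.expected);
      if (ok) passing.push({ upAt, downAt });
    }
  }
  return passing;
}
```

Keeping downAt below upAt is what makes a small wobble near the bar harmless: the counter has to drop all the way back down before the next rep can count.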

There’s also a camera test page for debugging permissions, angles, and lighting — because nothing says “Saturday night” like debugging pose detection with a flashlight.
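One useful thing for a page like that is listing which cameras the browser actually exposes. A sketch using the standard `navigator.mediaDevices.enumerateDevices()` call; describeCameras and listCameras are hypothetical names. Browsers typically return empty device labels until camera permission has been granted, which doubles as a quick permissions check.

```javascript
// Keep only video inputs and give unlabeled ones a readable placeholder.
function describeCameras(devices) {
  return devices
    .filter((d) => d.kind === "videoinput")
    .map((d, i) => ({
      id: d.deviceId,
      label: d.label || `Camera ${i + 1} (label hidden until permission)`,
    }));
}

// Browser-only.
async function listCameras() {
  const devices = await navigator.mediaDevices.enumerateDevices();
  return describeCameras(devices);
}
```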

Lessons Learned

- Browser APIs are magical but inconsistent.

Every device is a new adventure in “why doesn’t this camera work?” In the end, we tested it only on iPhones, a Windows laptop, and an old MacBook.

- Computer vision is humbling.

It’s not about perfection; it’s about being “good enough to impress your gym buddies”, which, in this case, is perfect. Literally.

- Performance tuning is hard.

Frame skipping and timing tweaks can make a difference. A big one.

- Simple deployment rules.

One HTML file. No server. No nonsense. Just open it and flex.

The Result

It works.

It actually tracks pull-ups in real-time, supports multiple players, shows a live leaderboard, and gives you visual proof of your effort.

Even better — it makes working out fun.

You can challenge friends, record your sessions to show another friend later, and argue about whose form is worse, all in the browser.

You can try it yourself at Pull-Ups Game — just allow camera access, stand in frame, and bring your best effort. Bonus points for dramatic lighting and Rocky-style music.

This little project reminded me that the browser is a powerful platform.

Between the APIs, ML models, and sheer accessibility, you can now build things that once needed a research lab — in a single HTML file.

And maybe, just maybe, that’s the best kind of pull-up: the kind that pulls the web forward.

Be strong 💪🏼

Discover more from Ido Green
